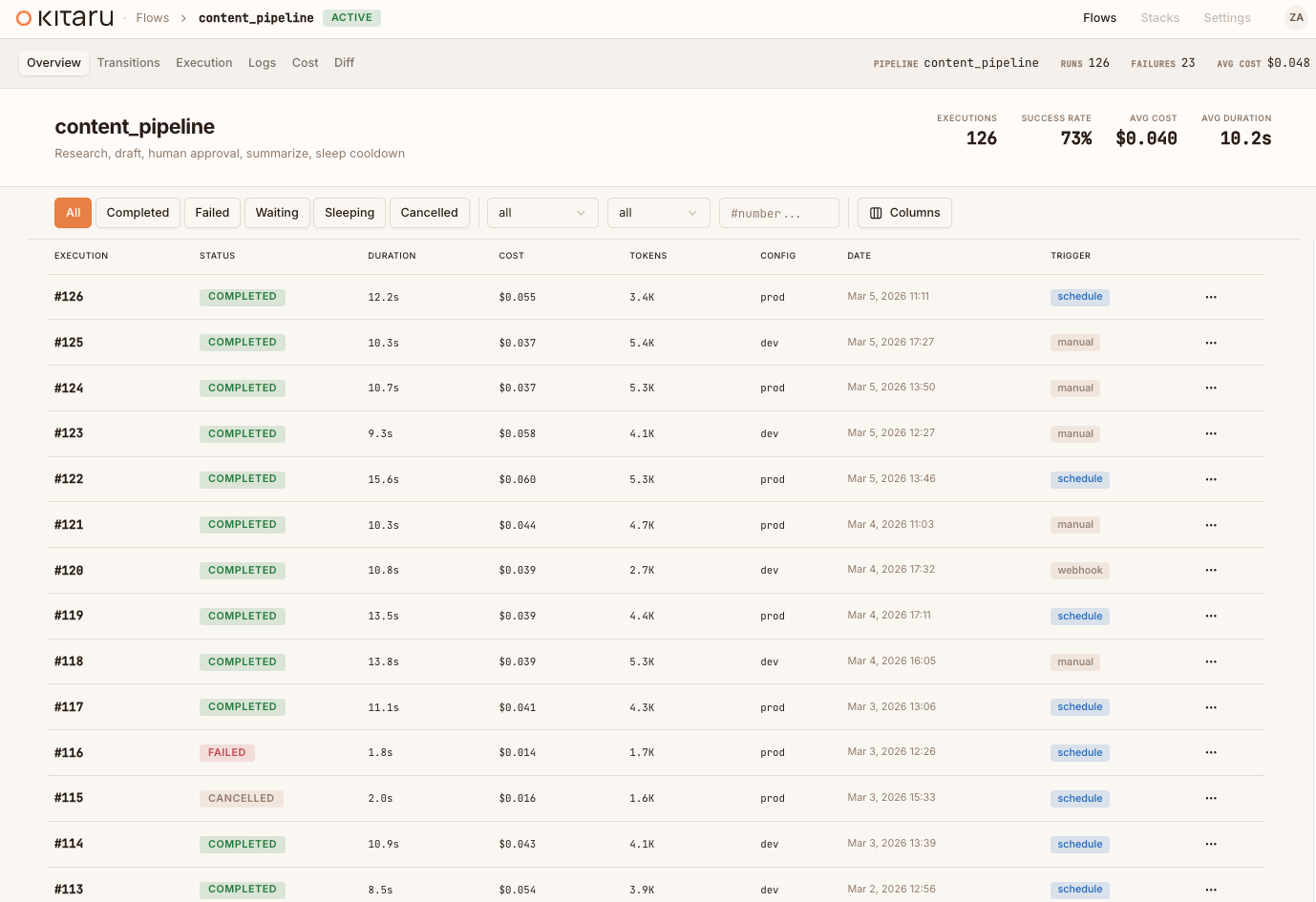

The open agent runtime.

Replay, don’t guess. Rerun production runs against new models, prompts, or skills. Compare evidence. Ship the variant that wins.

pip install kitaru

The inner harness is getting absorbed. The outer runtime is still missing.

Models and SDKs increasingly own the inner loop — call, tool, observe, repeat. The layer around it stays unsolved: recovery, sandboxes, approvals, replay, cheap waiting. Keep your SSO, RBAC, cloud accounts, and agent SDKs. Kitaru fills the gap.

Agent died at hour 11? Resume from hour 10.

Every durable checkpoint persists its inputs and outputs. Fix the failure and replay from the boundary that broke instead of re-running every model call and tool call upstream.
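To make the replay idea concrete, here is a minimal sketch of a durable boundary using only the standard library. It is not Kitaru's implementation: the `durable` decorator, the `STORE` directory, the `broken` flag, and both step functions are hypothetical names chosen for illustration.

```python
import hashlib
import json
import tempfile
from pathlib import Path

# Hypothetical on-disk store; a real runtime would write to an artifact store.
STORE = Path(tempfile.mkdtemp(prefix="checkpoints-"))
calls = {"analyze": 0, "execute": 0}  # counts real executions, for the demo
broken = {"execute": True}            # simulates a bug that gets fixed later


def durable(fn):
    """Persist a step's inputs and output; reuse the output on replay."""
    def wrapper(*args):
        raw = json.dumps([fn.__name__, args]).encode()
        path = STORE / f"{hashlib.sha256(raw).hexdigest()}.json"
        if path.exists():  # this boundary already completed: skip the work
            return json.loads(path.read_text())["output"]
        output = fn(*args)  # a failure here writes nothing
        path.write_text(json.dumps({"inputs": args, "output": output}))
        return output
    return wrapper


@durable
def analyze(task):
    calls["analyze"] += 1
    return f"plan for {task}"


@durable
def execute(plan):
    calls["execute"] += 1
    if broken["execute"]:
        raise RuntimeError("tool crashed")
    return f"done: {plan}"


def run(task):
    return execute(analyze(task))
```

If `run("audit")` fails inside `execute`, setting `broken["execute"] = False` and calling `run("audit")` again reuses the stored `analyze` output and only re-executes the step that broke.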

The brain and the hand should not share a process.

Run risky tools in controlled execution targets while the durable runner owns state, replay, and policy hooks. A sandbox failure becomes one failed checkpoint, not a lost agent.
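As a rough illustration of that separation, the sketch below runs a tool in a child interpreter and turns any crash into a plain result record. It assumes nothing about Kitaru's execution targets: `run_tool_isolated` is a hypothetical helper, and a subprocess is only a stand-in for a real sandbox, not a security boundary.

```python
import subprocess
import sys


def run_tool_isolated(code, timeout=10):
    """Run tool code in a child interpreter and capture the outcome."""
    try:
        proc = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return {"status": "failed", "error": "timeout"}
    if proc.returncode != 0:
        # Prefer the last stderr line; fall back to the exit code.
        lines = proc.stderr.strip().splitlines()
        error = lines[-1] if lines else f"exit code {proc.returncode}"
        return {"status": "failed", "error": error}
    return {"status": "ok", "output": proc.stdout.strip()}
```

The calling process survives whatever the child does, so a crashed tool shows up as one failed record that the runner can retry or replay.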

Wait on humans, webhooks, or agents without burning compute.

wait() suspends the run and releases the worker. When the input arrives minutes, hours, or days later, Kitaru rehydrates the execution and continues.
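One way to picture suspend-and-resume, sketched with the standard library only. This is an assumption about the general pattern, not a description of Kitaru's internals: `Suspended`, `deliver`, `run_worker`, and the `STATE` file are hypothetical names.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical run record; a real runtime would keep this in durable storage.
STATE = Path(tempfile.mkdtemp(prefix="runs-")) / "run-1.json"


class Suspended(Exception):
    """Raised to unwind the worker while the run waits for input."""


def load_state():
    return json.loads(STATE.read_text()) if STATE.exists() else {"inputs": {}}


def wait(name):
    state = load_state()
    if name in state["inputs"]:          # input arrived: continue past the wait
        return state["inputs"][name]
    STATE.write_text(json.dumps(state))  # persist the run so it can resume
    raise Suspended(name)


def deliver(name, value):
    """Called by a webhook or approval UI, possibly days later."""
    state = load_state()
    state["inputs"][name] = value
    STATE.write_text(json.dumps(state))


def review_flow(draft):
    approved = wait("publish")
    return f"published {draft}" if approved else "rejected"


def run_worker(draft):
    """One worker invocation: a result, or None if the run suspended."""
    try:
        return review_flow(draft)
    except Suspended:
        return None  # the worker can exit here; nothing stays running
```

In this sketch the flow re-enters from the top on resume; paired with persisted checkpoints, the steps before the wait would be skipped rather than re-run.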

Kitaru isn’t an agent platform. It’s the runtime layer your platform runs agents on.

Kubernetes can restart a pod. It cannot replay an agent from checkpoint 10 or wait three days for a human approval without burning compute.

Bundled agent platforms ship one model, one harness, one cloud. Kitaru is the open alternative: the same primitives, assembled on your stack.

Open source. Self-hosted. No lock-in to a model, harness, or platform.

Keep the inner harness. Add the outer runtime.

Keep the model loop your team already picked. Wrap the boundaries that matter — model calls, tool calls, approvals, and long-running phases — with durable state and replay.

OpenAI Agents SDK, before:

    from agents import Runner
    from agents.sandbox import SandboxAgent, Manifest

    # `manifest` and `task` are defined elsewhere.
    agent = SandboxAgent(
        name="Compliance Reviewer",
        model="gpt-5.4",
        default_manifest=manifest,
    )

    result = await Runner.run(agent, task)

OpenAI Agents SDK, with Kitaru:

    from kitaru.adapters.openai_agents import KitaruRunner, OpenAIRunRequest
    from agents.sandbox import SandboxAgent, Manifest

    agent = SandboxAgent(
        name="Compliance Reviewer",
        model="gpt-5.4",
        default_manifest=manifest,
    )

    # Wrap the agent so each call becomes a durable checkpoint.
    runner = KitaruRunner(agent, checkpoint_strategy="calls")
    result = await runner.run(OpenAIRunRequest.start(task))

Claude Agent SDK, before:

    from claude_agent_sdk import query, ClaudeAgentOptions

    options = ClaudeAgentOptions(
        system_prompt="You are a compliance reviewer.",
        allowed_tools=["search_docs", "fetch_policy"],
    )

    async for msg in query(prompt=task, options=options):
        process(msg)

Claude Agent SDK, with Kitaru:

    from kitaru import flow, checkpoint
    from claude_agent_sdk import query, ClaudeAgentOptions

    options = ClaudeAgentOptions(
        system_prompt="You are a compliance reviewer.",
        allowed_tools=["search_docs", "fetch_policy"],
    )

    @checkpoint
    async def run_claude(task: str) -> list:
        # Collect the full message stream so it persists as one output.
        return [msg async for msg in query(prompt=task, options=options)]

    @flow
    async def review(task: str):
        for msg in await run_claude(task):
            process(msg)

PydanticAI, before:

    from pydantic_ai import Agent

    agent = Agent(
        "openai:gpt-5.4",
        system_prompt="You are a compliance reviewer.",
        tools=[search_docs, fetch_policy],
    )

    result = await agent.run(task)

PydanticAI, with Kitaru:

    from kitaru.adapters.pydantic_ai import KitaruAgent
    from pydantic_ai import Agent

    agent = KitaruAgent(Agent(
        "openai:gpt-5.4",
        system_prompt="You are a compliance reviewer.",
        tools=[search_docs, fetch_policy],
    ))

    result = await agent.run(task)

Raw Python, before:

    from anthropic import Anthropic

    client = Anthropic()

    def my_agent(task: str) -> str:
        plan = analyze(client, task)
        # crash here? everything above is lost.
        result = execute(client, plan)
        return result

Raw Python, with Kitaru:

    from kitaru import flow, checkpoint
    from anthropic import Anthropic

    client = Anthropic()

    @flow
    def my_agent(task: str) -> str:
        plan = checkpoint(analyze)(client, task)
        result = checkpoint(execute)(client, plan)
        return result

The outer runtime production agents keep needing.

The model can own the loop. The runtime has to own what happens when the loop waits, fails, forks, or moves to remote infrastructure.

Pause. Release compute. Continue later.

Agents can wait on a human, another agent, or a webhook for hours or days. Kitaru persists the run, shuts down the worker, and rehydrates when input arrives.

Crash at step 6? Replay from step 6.

Every checkpoint writes real outputs to the artifact store. Fix the issue and replay from the failed boundary instead of re-burning upstream model and tool calls.

Keep the inner loop your team picked.

OpenAI Agents SDK, Claude Agent SDK, PydanticAI, LangGraph, or raw Python. Kitaru adds the runtime around the harness instead of replacing it.

Fan out work without losing the thread.

checkpoint.submit() dispatches branches concurrently. Each branch keeps its own durable boundary, so one failed search does not erase the rest.
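The fan-out behavior can be approximated with `concurrent.futures` from the standard library. This sketch does not call `checkpoint.submit()`; `search` and `fan_out` are hypothetical stand-ins that show how recording each branch separately keeps one failure from discarding the others.

```python
from concurrent.futures import ThreadPoolExecutor


def search(topic):
    """Stand-in for a branch of agent work; one topic fails on purpose."""
    if topic == "broken-source":
        raise ValueError("search backend unavailable")
    return f"results for {topic}"


def fan_out(topics):
    """Run each branch concurrently and record its outcome separately."""
    outcomes = {}
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = {topic: pool.submit(search, topic) for topic in topics}
        for topic, future in futures.items():
            try:
                outcomes[topic] = {"status": "ok", "output": future.result()}
            except Exception as exc:
                outcomes[topic] = {"status": "failed", "error": str(exc)}
    return outcomes
```

Calling `fan_out(["pricing", "broken-source", "policy"])` returns two successful branches and one failed record, so only the failed branch needs to be retried.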

The outer runtime is just Python.

    import kitaru
    from kitaru import flow, checkpoint

    kitaru.configure(stack="prod-k8s")

    @checkpoint
    def research(topic: str) -> dict:
        results = run_agent_search(topic)
        kitaru.save("sources", results)
        return summarize(results)

    @checkpoint(runtime="isolated")
    def write_draft(context: str, prev_id: str) -> str:
        prior = kitaru.load(prev_id, "sources")
        return kitaru.llm(
            "Draft a report on: " + context,
            model="gpt-4o",
        )

    @flow
    def report_agent(topic: str, prev_id: str) -> str:
        data = research(topic)
        draft = write_draft(str(data), prev_id)
        kitaru.log(topic=topic, words=len(draft.split()))

        approved = kitaru.wait(
            schema=bool, question="Publish?"
        )
        if approved:
            publish(draft)
        return draft

@flow Top-level durable boundary for the agent run.

@checkpoint Persists output. Crash at step 3? Steps 1-2 never re-run.

kitaru.wait() Suspends the run. Resume when a human, webhook, or agent responds.

kitaru.llm() Resolves the model alias, injects the key, and captures usage.

kitaru.log() Structured metadata on every execution. Query it in the dashboard.

kitaru.save() Persist the real intermediate outputs your agent will need later.

kitaru.load() Retrieve saved artifacts from an earlier execution by ID.

kitaru.configure() Move the same flow from local dev to remote infrastructure.

From laptop session to enterprise runtime.

Run the same agent flow locally, then move it to Kubernetes, SageMaker, Vertex AI, or AzureML with artifacts in your own bucket.

uv add kitaru && kitaru init

The brain and the hand should not have to share a process.

The runner owns durable control flow — checkpoint order, state, retry, replay, resume, and wait. Execution targets do the work: inline, in an isolated job, inside a sandbox, or through an external tool. Checkpoints are the contract between them.

    import kitaru
    from kitaru import flow, checkpoint

    @flow
    def coding_agent(issue: str) -> str:
        plan = analyze_issue(issue)
        patch = write_code(plan)

        # Pauses. Resumes when input arrives.
        approved = kitaru.wait(
            bool, question="Merge this PR?"
        )
        if approved:
            merge(patch)
        return patch

Your agent died at hour 11.

Don’t restart from hour 1.

pip install kitaru

Open source (Apache 2.0). Add the runtime around the agent you already have.